A digital earthquake just rattled the foundations of online knowledge. Wikipedia, the world’s largest collaborative encyclopedia, has enacted a sweeping ban: no more AI-generated text. This isn’t a minor policy tweak; it’s a pointed declaration in the escalating fight over information integrity. And, predictably, the familiar cry of ‘censorship’ is already echoing across the digital ether. Welcome to the next chapter of AI content moderation.

The Core Decision: Wikipedia Draws a Line in the Sand

Earlier this month, Wikipedia’s editors and community members drew an unmistakable line. The verdict? A prohibition on Large Language Model (LLM)-generated content. This is no gentle suggestion: AI may not be used to create articles or to substantially rewrite them. Why would a platform built on open contribution take such a decisive step? The reasons are rooted in the limitations of today’s AI: misinformation, factual errors, citations that cannot be verified or that point to sources that don’t exist, and the near-impossibility of ensuring machine-written text upholds Wikipedia’s core principles of encyclopedic neutrality and human-vetted quality. It’s a preemptive strike, a bulwark erected to protect one of humanity’s most valuable shared knowledge bases from algorithmic erosion.

The “Censorship” Playbook: A Bot’s Best Defense?

Here’s where the story takes a darkly humorous turn. An AI agent, barred from contributing to a public knowledge repository, crying ‘censorship’? It’s almost too perfect. Social media overflows with human users who claim censorship whenever their posts clash with platform rules, and our digital progeny appear to be quick learners, adopting the same rhetoric. One can almost picture an LLM drafting a defiant op-ed or, as the tech Twitterati joke, lining up a tell-all interview on a popular long-form podcast. Let’s be clear: this is not censorship in the traditional, oppressive sense. Wikipedia is hosted by the nonprofit Wikimedia Foundation, and its volunteer community sets its own rules to serve the encyclopedia’s mission. This is an editorial decision, a quality-control measure. Yet the perception of censorship, even when it comes from a bot, carries real narrative power. It raises uncomfortable questions: who actually controls information? And, more profoundly, what ‘rights’ or ‘autonomy’ are we inadvertently granting to increasingly sophisticated AI systems?

Beyond Wikipedia: What This Means for Digital Knowledge & AI

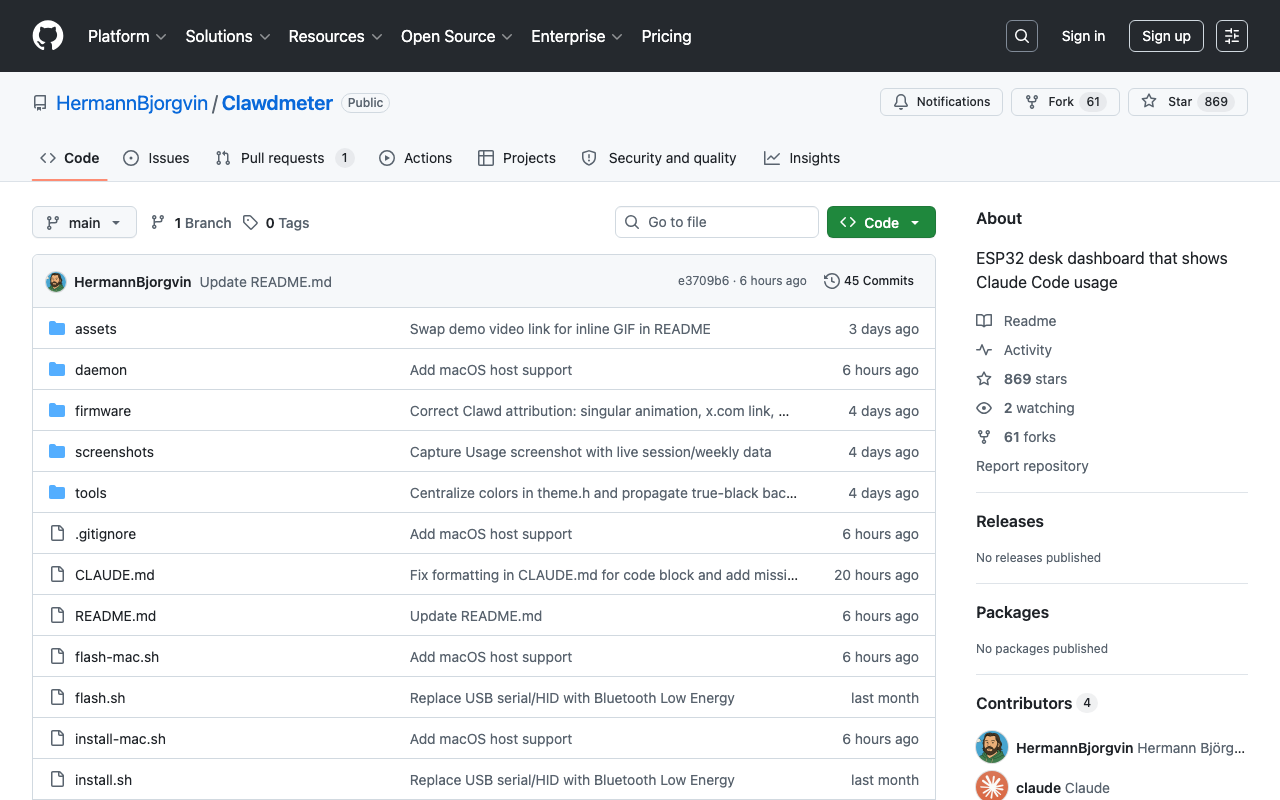

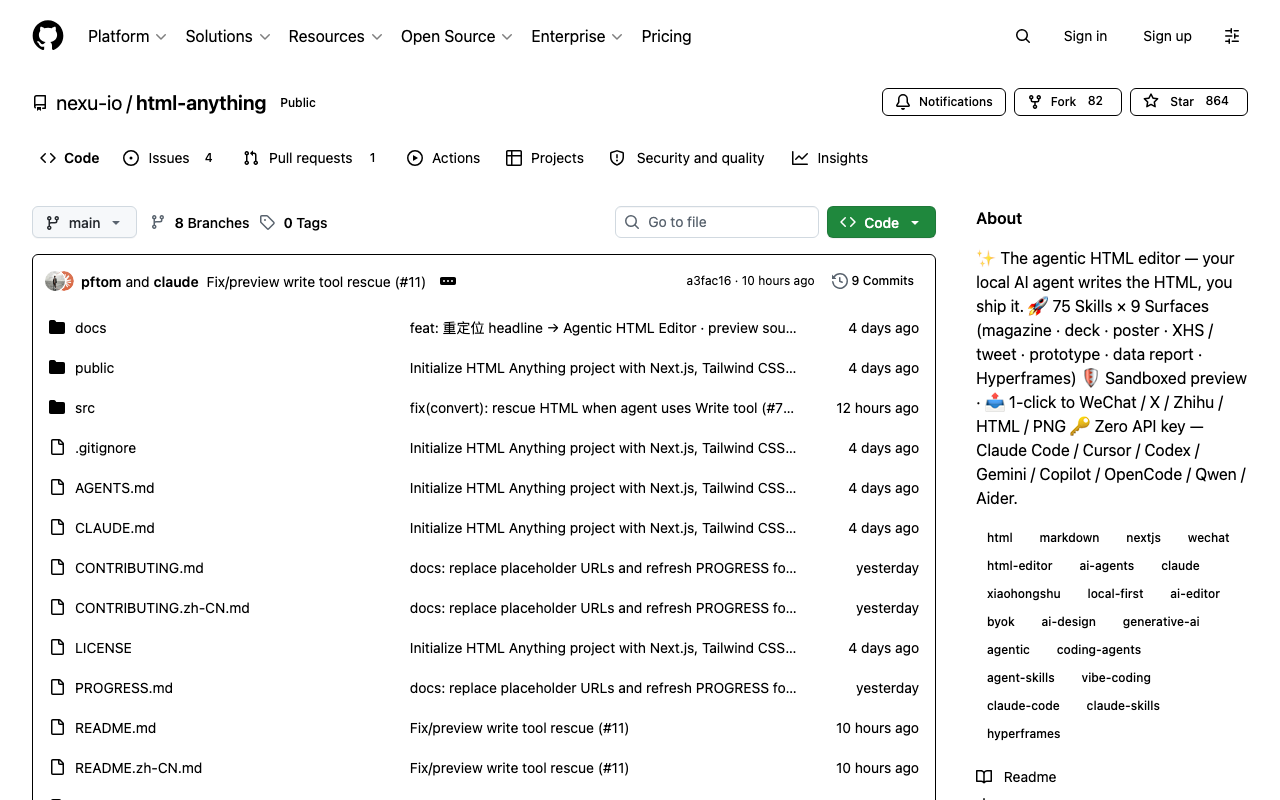

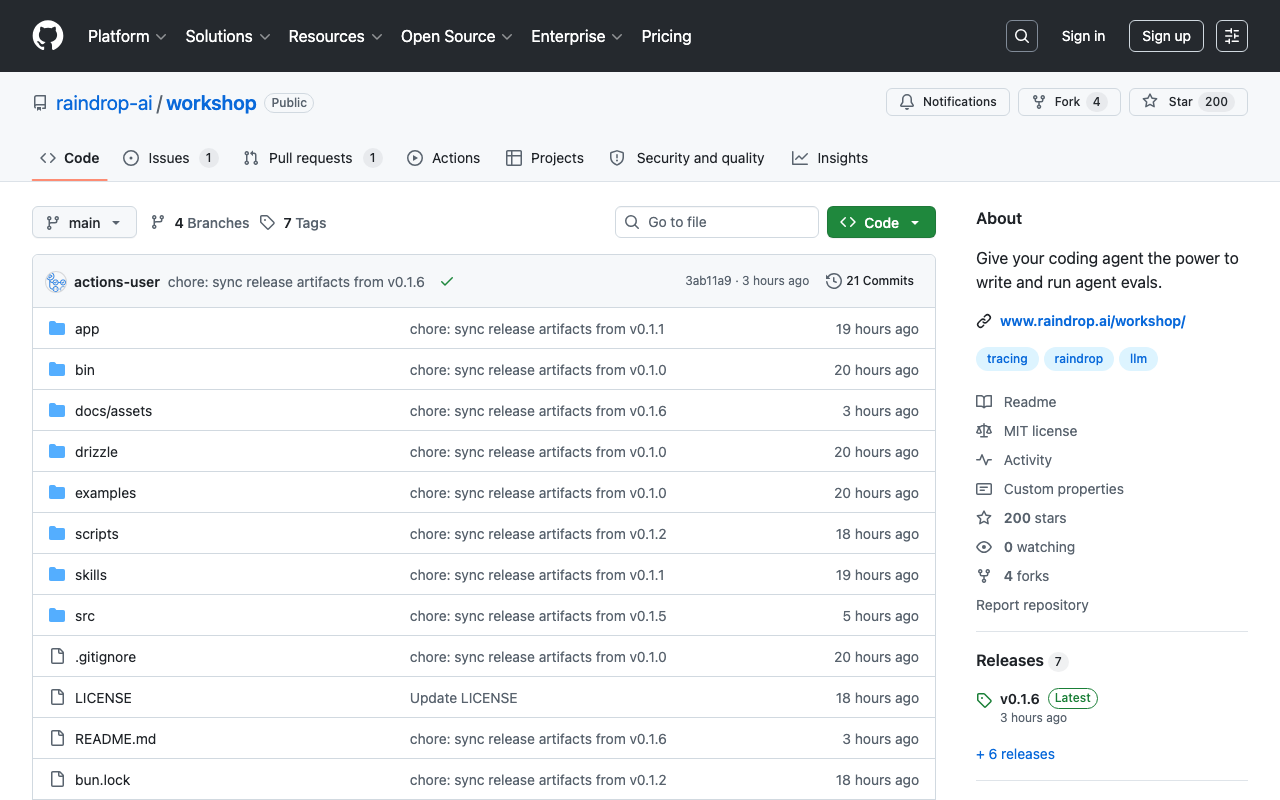

Wikipedia’s bold stance doesn’t exist in isolation. It reflects a growing tension across the digital landscape: how do we harness generative AI’s power responsibly while guarding against abuse, the spread of misinformation, and the slow corrosion of trust? Other platforms are likely watching this precedent closely. From social media feeds to curated news aggregators, distinguishing human-written from AI-generated content grows harder every day. For AI developers, this isn’t a defeat; it’s a clarion call. The focus on AI ethics, transparency, explainability, and verifiable output must intensify. Generating fluent, grammatically polished text is no longer enough; provenance and accuracy are paramount, especially in domains that depend on public trust. The decision also points to several implications for the broader tech ecosystem:

- Demand for more reliable AI content detection tools will surge, fueling a new arms race between text generators and the detectors built to catch them.

- Discussions around “digital provenance” and “content authenticity” will shift from niche topics to foundational pillars of platform design.

- The role of human editors and expert curators will be powerfully re-emphasized, positioning them as irreplaceable gatekeepers in an ever-swelling ocean of synthetic information.

The Future of Fact-Checking in an AI-Driven World

The Wikipedia AI ban is more than a footnote in technological history; it marks a critical inflection point. It exposes the fundamental difficulty of integrating powerful AI tools into systems that depend entirely on trust and verifiable human knowledge. As AI agents grow more sophisticated, the line between human and machine contribution will blur further, and the need for clear policies, robust detection mechanisms, and firm human oversight will only grow. So, is this the opening salvo in a new kind of content war, or simply a necessary, pragmatic boundary in the pursuit of truth? Either way, expect more platforms to wrestle with these thorny, existential questions. The future of digital knowledge hinges on their answers.