Google TPU's Four "Asymmetric Advantages": How the Chip War Is Really Played

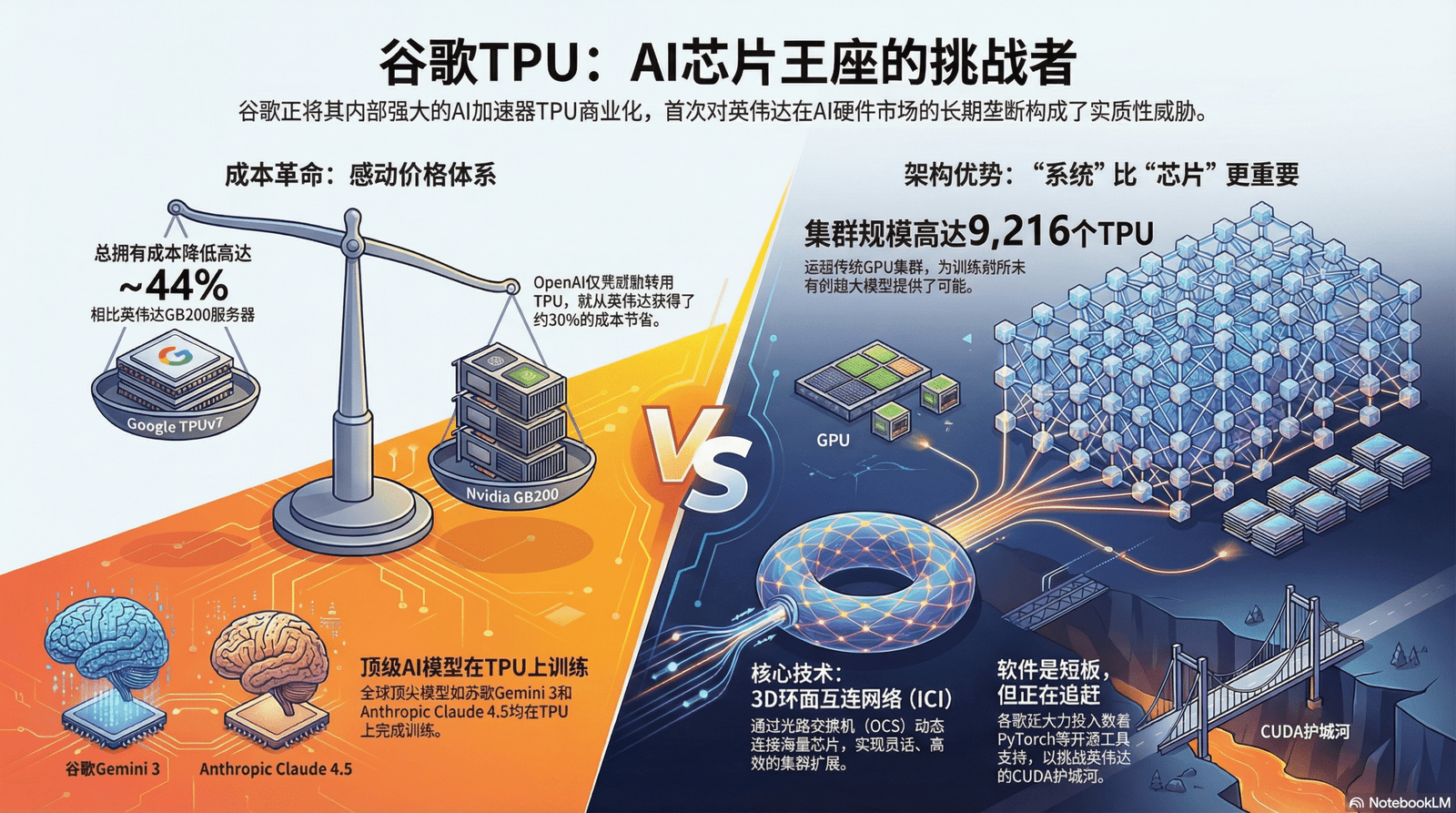

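In AI hardware, Google's TPU is fast becoming a formidable rival to NVIDIA and is reshaping the market. Beyond its system-architecture advantages and higher real-world efficiency, the TPU is also challenging the conventional rules with an innovative financing model. That combination sharpens its competitiveness and is gradually reshaping the future of AI infrastructure.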

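Plenty of you want to try building workflows or RAG on a low-code platform, but how do you choose among Coze, Dify, and N8N? What sets each one apart, and which one suits you? Here's a veteran's breakdown. 📌 No fluff, no teasing; the veteran's conclusion comes first: Coze = Bot as a S...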

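This report offers an analytical framework that traces inference compute demand from user penetration to token calls to hardware spending. By estimating future token call volumes, total compute demand, and the pace of future hardware spending for Google and Microsoft (OpenAI), we conclude that inference compute demand is growing faster than unit compute cost...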
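At its core, a framework like this is a chain of multiplications: users to tokens, tokens to FLOPs, FLOPs to accelerators, and accelerators to dollars. The Python sketch below shows one simplified way to link those steps. Every input is a hypothetical placeholder, not a figure from the report. The "2 × parameters" FLOPs-per-token figure is a common rule of thumb for a dense transformer forward pass. The sketch also assumes the fleet is sized for average load, with no peak headroom.

```python
import math
from dataclasses import dataclass

SECONDS_PER_DAY = 86_400


@dataclass
class InferenceAssumptions:
    # All fields are hypothetical inputs for illustration, not figures from the report.
    daily_active_users: float
    queries_per_user_per_day: float
    tokens_per_query: float
    active_params: float      # model parameters used per generated token
    accel_peak_flops: float   # peak FLOP/s of one accelerator
    utilization: float        # fraction of peak actually sustained (0-1)
    accel_unit_cost: float    # purchase price of one accelerator


def daily_tokens(a: InferenceAssumptions) -> float:
    # Step 1: user penetration -> token calls per day
    return a.daily_active_users * a.queries_per_user_per_day * a.tokens_per_query


def daily_flops(a: InferenceAssumptions) -> float:
    # Step 2: token calls -> compute demand, using the ~2 FLOPs/parameter/token rule of thumb
    return 2 * a.active_params * daily_tokens(a)


def accelerators_needed(a: InferenceAssumptions) -> int:
    # Step 3: compute demand -> accelerator count (average load only, no peak headroom)
    sustained_per_day = a.accel_peak_flops * a.utilization * SECONDS_PER_DAY
    return math.ceil(daily_flops(a) / sustained_per_day)


def hardware_spend(a: InferenceAssumptions) -> float:
    # Step 4: accelerator count -> hardware spending
    return accelerators_needed(a) * a.accel_unit_cost


if __name__ == "__main__":
    example = InferenceAssumptions(
        daily_active_users=1e8,
        queries_per_user_per_day=10,
        tokens_per_query=1_000,
        active_params=1e11,
        accel_peak_flops=1e15,
        utilization=0.4,
        accel_unit_cost=30_000,
    )
    print(f"tokens/day:   {daily_tokens(example):.3e}")
    print(f"accelerators: {accelerators_needed(example):,}")
    print(f"spend:        ${hardware_spend(example):,.0f}")
```

With these made-up inputs, the sketch works out to 1e12 tokens per day and 5,788 accelerators. The structure of the chain matters here, not the numbers. If token volume grows faster than unit compute cost falls, `hardware_spend` rises, which is the kind of relationship the report's conclusion addresses.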

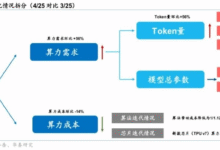

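Because of value misalignment and cost pressure, traditional pricing approaches are breaking down, and software companies need disruptive new pricing models more than ever. Recently, tech writer Kyle Poyar collected data from more than 240 software companies with annual recurring revenue (ARR) between 1 million and 2...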

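By Ding Lingbo. In recent days the AI world has been hit by a shockwave of announcements, because this year's Microsoft Build and Google I/O developer conferences landed at the same time. The two tech giants' AI strategies each have their own strengths, and developers around the world face yet another flood of AI tools. On May 19 local time in Seattle, Microsoft Bui...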

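Early this morning, Altman unexpectedly announced OpenAI's latest o-series models, the full o3 and o4-mini. He said both models can freely use the tools inside ChatGPT, including but not limited to image generation, image analysis, file interpretation, web search, and Python. In short, compared with...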

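The 2025 AI 50 list shows how companies are using agents and reasoning models to take on real enterprise workflows. AI is entering a new phase in 2025. For years, AI tools mainly answered questions or generated content on command; this year's innovation is about AI actually getting work done. In 2025...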

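We asked OpenAI which 20 jobs are most likely to be replaced by AI. Here is o3's reply. Friendly reminder: this information is AI-generated and meant for fun, so don't take it too seriously. Prompt: "List 20 jobs that OpenAI's GPT-4o reasoning model could replace, ranked by probability." o3's answer: I will research...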

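DeepSeek-R1 set off a frenzy in the global AI community with unexpected speed, yet high-quality information about DeepSeek remains relatively scarce. On January 26, 2025, Li Guangmi, founder and CEO of Shixiang, organized a closed-door discussion on DeepSeek with guests including dozens of top AI researchers, investors, and...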

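Just today, the Doubao app released a major update that gives its real-time call feature a new interaction experience. The voice is more human-like and natural, and it now comes very close to genuine emotional human speech. A few hours later, DeepSeek-R1 was released as a direct rival to OpenAI o1, with top-tier capability; DeepSeek-V3 had already won over a broad user base...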

Forget the frustrating memories of paper jams and exorbitant ink cartridges. For decades, traditional printers have barely limped along, offering incremental updates that felt more like apologies than innovations. But what if Anker, the brand behind reliable power banks and intuitive smart home devices, just ignited a true revolution in printing? They're not just entering the market; they're promising a printer that can print on almost anything.

Enter the Anker EufyMake E1, an ultraviolet (UV) printer poised to yank 'printing' from the digital dark ages. This isn't a mere upgrade; it's a paradigm shift. Imagine laying down vibrant, durable ink and instantly curing it on canvas, wood, metal, plastic, glass, ceramics, or even leather. While such groundbreaking technology naturally carries a premium price, the critical question remains: Is the EufyMake E1 the creative liberation small businesses, artists, and serious DIY enthusiasts have been desperately awaiting?

The collective groan surrounding printers is palpable. Decades of marginal improvements and proprietary ink wars have left users frustrated, with devices that often feel more like expensive paperweights than productivity tools. The EufyMake E1 challenges this stagnation head-on with UV printing technology. Conventional printers deposit wet ink that must then dry, and it often smudges or bleeds. UV printers instead use specialized inks that focused ultraviolet light cures almost instantly, turning the liquid into a solid, durable layer. This rapid process produces vibrant, abrasion-resistant prints that adhere to an astonishing variety of porous and non-porous surfaces. The possibilities are truly transformative:

This isn't merely about printing a document. It's about unlocking unprecedented customization and creation. The EufyMake E1 empowers individuals and small businesses to transform ordinary objects into personalized masterpieces, effectively bringing small-scale manufacturing and bespoke product creation within reach of any home studio or workshop.

While specific technical details beyond its core UV capability remain under wraps, the EufyMake E1's mere existence, backed by Anker, speaks volumes. Anker's reputation for user-friendly, reliable tech, even in sophisticated lines like Eufy smart home products, strongly suggests the E1 will offer an approachable interface. This design philosophy aims to dramatically lower the barrier to entry for what was once a complex, industrial-only printing method.

Imagine: You design an intricate pattern on your computer, then effortlessly print it onto a ceramic mug, a leather wallet, or even a golf ball. The results are stunning: impressive durability, vibrant color fidelity, and a finish that feels integrated, not just applied. This capability far surpasses the limitations of sticker application or heat transfers, delivering a genuinely premium, professional-grade result.

For graphic designers, artists, and crafters, the EufyMake E1 isn't just a tool; it's a catalyst for entirely new revenue streams and boundless creative avenues. Product designers can achieve rapid, high-quality iteration on physical prototypes in-house, eliminating costly, time-consuming outsourcing. This device fundamentally redefines what's achievable within a home studio or small workshop.

Let's address the obvious: the Anker EufyMake E1 will carry a significant premium. This isn't surprising. It's a groundbreaking device democratizing a technology previously confined to industrial settings. While large-scale UV printers have long existed, their price tags often soared into the tens or even hundreds of thousands of dollars, demanding specialized training and dedicated environments. The EufyMake E1 aims to bring this advanced capability to a prosumer market.

The EufyMake E1 represents a meticulously engineered, scaled-down version of this advanced industrial technology, tailored for the prosumer. Its price tag directly reflects several key factors:

So, is this investment justified? For the casual user seeking to print standard documents or a few family photos, unequivocally no. However, for a small business aiming to offer truly unique, custom-branded products, an artist eager to explore new mediums, or a serious hobbyist driven by boundless creative potential, the EufyMake E1 transcends mere gadgetry. It's an investment in unparalleled capability, market differentiation, and the potential to unlock entirely new revenue streams and artistic expressions previously unattainable.

The Anker EufyMake E1 isn't merely a new printer; it's a profound statement. It signals a seismic shift in how we perceive digital fabrication and personalization. This device could be the spark igniting a fresh wave of innovation, compelling other manufacturers to pursue similar advancements, making sophisticated printing technologies more accessible and, eventually, more affordable for everyone.

We are witnessing a fascinating inflection point in technology. Anker is clearly positioning itself not just as a hardware innovator, but as a potent enabler of truly boundless creativity. While its premium price point will undoubtedly give many pause, the EufyMake E1's transformative potential is undeniable. Perhaps, at long last, the era of printer-induced headaches is drawing to a close, replaced by the exhilarating prospect of printing on virtually anything, anywhere.

Imagine your car transforming into a mobile command center, a brainstorming partner, or a personal tutor. No more fumbling with phones; ChatGPT is now live on your Apple CarPlay dashboard, revolutionizing in-car AI. This isn't merely an update; it's a seismic shift, courtesy of Apple's iOS 26.4. This foundational update specifically unlocked the 'voice-based conversational apps' API within CarPlay, flinging open the gates for AI powerhouses like ChatGPT to become your indispensable digital co-pilot.

Ready to upgrade your commute? The process is refreshingly simple, especially if you're already plugged into the Apple ecosystem:

Meet these two prerequisites, and ChatGPT will seamlessly appear on your CarPlay dashboard. Suddenly, the boundless knowledge and sophisticated conversational abilities of ChatGPT are just a voice command away, completely hands-free.

This isn't about adding another icon. This is about fundamentally redefining the driving experience. How does a powerful AI like ChatGPT truly elevate your time behind the wheel?

This isn't merely "play music" or "get directions." This is about injecting genuine, intelligent conversation and complex problem-solving directly into your vehicle, safely and intuitively.

The arrival of ChatGPT on Apple CarPlay is about more than a single app. Apple's decision to open CarPlay to third-party voice-based conversational apps is a major strategic shift. Expect other generative AI chatbots to follow suit, turning our dashboards into intelligent command centers where each one competes to be your ultimate digital co-pilot.

Naturally, this evolution isn't without its speed bumps. Data privacy concerns, the fine line of voice-command distraction, and refining complex user interactions will undoubtedly emerge as crucial discussions. Yet, the horizon gleams with immense potential: unparalleled convenience, amplified safety, and a truly intelligent, connected driving experience.

The definition of 'smart car' isn't just expanding; it's exploding. Are you ready for an AI co-pilot on your next adventure?

Imagine capturing a breathtaking moment, only to have AI subtly rewrite its details. This isn't science fiction; it's the imminent reality of smartphone photography, with the Samsung Galaxy S26 poised to lead an unprecedented AI revolution. As our devices evolve into sophisticated digital alchemists, a critical question looms: are we truly enhancing our cherished memories, or are we, in our pursuit of photographic 'perfection,' inadvertently 'sloppifying' the genuine essence of what transpired? This isn't just a tech debate; it's a philosophical tightrope walk, and the Galaxy S26 is about to push us further along its edge.

The groundwork for this AI transformation was arguably laid by the Google Pixel series, particularly with features like Magic Eraser and Photo Unblur. Google's initial foray into AI editing was subtle: a slightly bluer sky, a magically vanished photobomber from a pristine landscape. These tools, lauded for their convenience, promised effortless image refinement. Yet, as capabilities expanded – think 'Best Take' or generative fill – the line between enhancement and outright fabrication began to blur. Things got, unmistakably, weird.

Google's evolution of AI within its Photos app isn't just a feature roadmap; it's a cautionary tale. What started as intelligent background tweaks morphed into profound reality-bending tools. This wasn't merely applying a filter; it was rewriting the visual narrative with a few taps. Suddenly, users possessed the power of digital alchemy, reshaping fundamental image elements. Creative freedom soared. But so did the ethical tightrope's sway. The appeal is undeniable: professional-grade photo editing, once reserved for experts, is now democratized. Anyone can craft a stunning visual story. Yet, this accessibility births a paradox: the simpler it becomes to alter an image, the more we question the authenticity of any image. This precarious stage is precisely where the Galaxy S26 is poised to make its dramatic entry.

The unsettling notion of 'sloppifying memories' doesn't imply poor image quality. Quite the opposite. It signifies making them less faithful to the original moment. When AI seamlessly adds a missing friend, removes an unwanted stranger, or even subtly shifts a frown into a smile, are we still documenting life as it happened? Or are we meticulously crafting a revised, idealized narrative? A poignant observation rings true: "Photos are whatever you want them to be, I guess." This encapsulates the core dilemma. Empowerment surges: minor imperfections vanish, creative visions materialize, good photos become great. But this power also erodes the bedrock of authenticity. If every image can be flawlessly curated, does the raw, unedited snapshot – blemishes, unexpected expressions, and all – lose its intrinsic value? For a generation whose lives are increasingly digital chronicles, this isn't just a question; it's an existential query.

Samsung, a titan of innovation and Google's fierce competitor, is unlikely to merely mimic the Pixel's AI prowess. The Galaxy S26 is expected to catapult AI photo editing into uncharted territory, offering tools that are not only more intuitive and powerful but potentially far more controversial, integrated seamlessly into its native camera app.

These advancements, while technologically dazzling, will inevitably intensify the authenticity debate. Will Samsung opt for transparency, clearly labeling AI-generated elements, or will it prioritize an unblemished, 'perfect' user experience? The answer will shape public trust and define our collective embrace of this new digital alchemy.

As the Galaxy S26 prepares for launch, ushering in a new era of AI-powered visuals, consumers and creators alike confront a profound choice. These tools grant unprecedented power to sculpt our visual narratives and to fabricate images that perfectly mirror our idealized visions. But with this power comes a weighty responsibility: to understand the implications of every 'perfect' pixel we generate and share. Will the relentless pursuit of flawlessness lead us into a future where our digital memories are less faithful chronicles of reality and more curated fantasies of what we wished had been? Or can we, with deliberate intent, learn to wield these potent AI tools thoughtfully, using them to subtly enhance, rather than outright replace, the raw, beautiful authenticity that makes life's moments truly unforgettable? The Galaxy S26 is more than just a smartphone; it's a technological marvel, a harbinger of AI's pervasive influence, and a mirror reflecting the evolving, complex relationship we're forging with our digital pasts.

The future of art isn't just arriving; it's already enrolled in art school. A profound, often uncomfortable shift is gripping creative education: artificial intelligence isn't an elective anymore; it's a core discipline. As a former design student, I recognize the mix of pride and creeping dread my baby brother feels as he navigates his 3D modeling and animation studies. That tension stems from a fundamental truth now taking hold: like it or not, AI is now an undeniable part of art school curriculums.

For many art schools, the question is no longer whether to teach AI but how. Major institutions worldwide are actively teaching aspiring creatives how to use artificial intelligence, integrating it into everything from concept development to final production. This isn't a fringe course; it's becoming a foundational component, preparing the next generation of artists, designers, and animators for a rapidly evolving professional landscape.

For many, this reality is a bitter pill to swallow. Yet, the push from educational bodies to incorporate AI tools isn't arbitrary. It stems from a very practical need: preparing students for the real world. Creative industries – be it film, gaming, advertising, or product design – are already leveraging AI for various tasks. From generating initial concept art and storyboards to automating tedious tasks like texture generation, rigging, or even initial animation cycles in 3D, AI is proving to be a powerful, if controversial, accelerator – a digital alchemist speeding up the creative process.

Ignoring this shift would be a profound disservice to students who will soon enter a job market where proficiency with these tools might not just be an advantage, but an absolute necessity. Art schools, therefore, find themselves in a challenging position: to uphold traditional artistic values while simultaneously equipping students with the cutting-edge skills demanded by industry. It's a delicate tightrope walk.

So, what does "utilizing AI" actually mean in an art school setting? It's not about replacing human creativity entirely, but rather about augmenting it. Students are learning to use AI for:

However, this integration is far from seamless, and its ethical implications loom large. Concerns about copyright infringement, the homogenization of artistic styles, and job displacement are valid and widely discussed. How should educators guide students through a landscape where tools may be trained on scraped copyrighted data, nudge work toward a shared aesthetic, or threaten traditional livelihoods? These are not easy questions.

It's no secret that a significant portion of the creative community, including students and faculty, harbors deep reservations about AI art, and some feel outright hatred for it. Their arguments are often rooted in a passionate desire to protect the sanctity of human creativity, the irreplaceable value of craft, and the livelihoods of artists who dedicate years to honing their skills.

This internal resistance presents a unique challenge for educators. How do you motivate students to learn tools they view as a threat? How do you foster a learning environment that encourages innovation while also addressing legitimate ethical and philosophical objections? This tension is palpable on many campuses, leading to important, albeit often heated, debates about the very definition of "art" in the age of algorithms. It’s a battle for the soul of creativity.

Redefining what it means to be a creative professional in the 21st century is at the heart of AI's inclusion in art school curriculums. It requires a nuanced approach that emphasizes critical thinking, ethical reasoning, and a deep understanding of artistic principles alongside technical proficiency with AI tools. The goal isn't to create artists who simply prompt-engineer their way to a career, but rather to cultivate highly skilled individuals who can harness powerful technologies responsibly and innovatively.

The future of creativity, it seems, won't be about AI versus artists, but about how artists choose to partner with AI, shaping its development and application to serve human expression. AI isn't just a tool; it's becoming a new kind of collaborator, a digital apprentice that can accelerate ideation and execution. What are your thoughts on AI in art schools? Is it a necessary evil, or a powerful new brushstroke in the artist's palette?

April 1st isn't just a date; it's a looming cyber storm warning. Iran's Islamic Revolutionary Guard Corps (IRGC) has explicitly named tech giants like Apple, Google, and Microsoft as direct targets, marking a chilling escalation in nation-state cyber warfare. This isn't a drill. It's a direct ultimatum, poised to send shockwaves through global digital infrastructure. What does this mean for your organization, your data, and the interconnected tech ecosystem? Prepare for impact.

The IRGC's declaration is not a vague warning; it's a calculated, direct threat with a specific April 1st start date. Its stated intent is to launch disruptive and data-exfiltration attacks against more than a dozen American companies, particularly those with significant Middle East operations. The stated motivation is retaliation. Geopolitical tensions frequently spill into cyberspace, but explicitly naming global tech behemoths like Apple, Google, and Microsoft raises the stakes dramatically. A direct hit wouldn't just cause localized disruption, because these firms form the bedrock of global digital infrastructure. Imagine a crack in the foundation of a skyscraper: the entire structure's integrity is compromised. A successful breach could trigger cascading failures across supply chains, compromise vast swathes of data, and destabilize countless critical services we rely on every second.

For cybersecurity professionals, IT leaders, and anyone vested in digital safety, this threat serves as a chilling reminder of cyber warfare's relentlessly evolving nature. It spotlights several critical vulnerabilities:

Ask yourself: Are your organization's digital ramparts robust enough to withstand the inevitable fallout from such high-profile, nation-state-backed assaults?

While primary responsibility falls on the explicitly targeted firms, this event is a universal wake-up call. Proactive, immediate measures are not optional; they are paramount:

The April 1st deadline is more than a calendar entry; it's a critical inflection point for heightened vigilance. Whether or not successful attacks materialize, the IRGC's explicit warning is itself a significant event. It compels organizations to fundamentally re-evaluate their defenses and forces governments worldwide to grapple with the implications of unconstrained, state-sponsored cyber aggression. For a professional tech audience, this isn't abstract news from a distant conflict. It's a visceral reminder that cybersecurity is a continuous, relentlessly evolving battle, inextricably intertwined with global geopolitics. The digital ramparts safeguarding our data and infrastructure face relentless assault, and the stakes have never been higher. Staying informed, proactive, and resilient is not merely advisable; it is imperative in this volatile new era of geopolitical cyber threats.

Duolingo CEO Luis von Ahn, co-inventor of CAPTCHA and creator of reCAPTCHA, has delivered a radical message to the tech world: he wants to 'delete' blockchain. This isn't casual banter. Coming from the architect of systems that verify billions of human interactions daily, this pronouncement isn't just provocative; it's a direct challenge to the industry's fervent embrace of decentralized technologies. Why would a mind so adept at building internet infrastructure declare war on Web3's foundational technology?

Von Ahn's innovations helped digitize the equivalent of roughly 2.5 million books a year through reCAPTCHA and thwarted hundreds of billions of spam messages, and he isn't known for dismissing new tech lightly. He builds solutions. So what drives his strong, even incendiary, stance against blockchain and the broader crypto ecosystem?

Luis von Ahn has built a career on creating incredibly useful, widely adopted technologies. CAPTCHA, for all its user friction, was an indispensable bulwark against spam and bot attacks. reCAPTCHA then cleverly repurposed those human validations to digitize vast libraries. This is a man who understands how to build internet infrastructure that solves undeniable problems.
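The trick of turning a bot check into book digitization is easy to miss in a single sentence. As commonly described, each challenge paired a control word whose answer was already known with an unknown word that OCR had failed to read. A correct answer on the control word was taken as evidence of a human, and that person's reading of the unknown word was then counted as a vote toward its transcription. The Python sketch below models that idea under loose assumptions. The class and function names, the vote weights, and the acceptance threshold are all hypothetical illustrations, not reCAPTCHA's actual code or parameters.

```python
from collections import defaultdict
from typing import Iterable, Optional

# Hypothetical, illustrative values: machine (OCR) guesses count for less than
# human answers, and a reading is accepted once its weighted votes reach the threshold.
HUMAN_WEIGHT = 1.0
OCR_WEIGHT = 0.5
ACCEPT_THRESHOLD = 2.5


class UnknownWord:
    """A scanned word that OCR could not read reliably (hypothetical model)."""

    def __init__(self, ocr_guesses: Iterable[str]) -> None:
        self.votes = defaultdict(float)  # candidate reading -> weighted vote total
        for guess in ocr_guesses:
            self.votes[guess.strip().lower()] += OCR_WEIGHT

    def record(self, reading: str) -> None:
        # One trusted human reading adds a full vote for that transcription.
        self.votes[reading.strip().lower()] += HUMAN_WEIGHT

    def accepted(self) -> Optional[str]:
        """Return the leading reading once it clears the threshold, else None."""
        if not self.votes:
            return None
        reading, score = max(self.votes.items(), key=lambda kv: kv[1])
        return reading if score >= ACCEPT_THRESHOLD else None


def submit(control_answer: str, control_typed: str,
           unknown: UnknownWord, unknown_typed: str) -> bool:
    """Pass or fail one challenge; only a likely human's unknown-word answer is counted."""
    if control_typed.strip().lower() != control_answer.lower():
        return False  # failed the known word, so the other answer is not trusted
    unknown.record(unknown_typed)
    return True


if __name__ == "__main__":
    word = UnknownWord(["mornmg", "morning"])  # two OCR engines disagree
    submit("upon", "upon", word, "morning")    # passes; "morning" now has 1.5 votes
    submit("upon", "upn", word, "morning")     # fails the control word; no vote counted
    submit("upon", "UPON", word, "Morning")    # passes; "morning" reaches 2.5 votes
    print(word.accepted())
```

The design choice that matters is the gate in `submit`. The unknown word is only trusted when the known word was answered correctly, so the same keystrokes that prove a user is human also do useful transcription work.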

His skepticism towards blockchain isn't academic; it's a fundamental challenge: Where are the real-world, scalable problems blockchain genuinely solves today? Not theoretical promises, but tangible, widespread utility. While proponents tout decentralization, immutable ledgers, and novel economic models, von Ahn's pragmatic lens, as reported, highlights a perceived chasm between grand vision and practical application beyond speculative finance. He's not airing a personal grievance; his critique is rooted in the same "does it work?" metric he applied to CAPTCHA: Does it provide tangible, broad-audience value? For blockchain, in its current iteration, he evidently sees significant shortcomings.

It's deeply ironic that the inventor of a system designed to verify human users in a trustless online environment is so dismissive of a technology often lauded for its ability to build trust without intermediaries. But perhaps that's the very crux of his argument.

When someone with such a strong track record of making the internet *work better* voices such a pointed opinion, it's a stark reminder that not all innovation is created equal, and that it doesn't always follow a predictable, immediately useful path. From his perspective, blockchain often looks like a solution in search of a problem.

Von Ahn's comments serve as a crucial gut-check for the entire tech industry. In an era where "Web3," "decentralization," and "tokenomics" are often buzzwords, his pragmatic approach forces us to ask tough questions:

Are we building genuine utility, or merely constructing elaborate digital castles in the air, fueled by speculative fervor? Is the promise of blockchain truly delivering on its revolutionary potential, or are we still largely confined to a niche, experimental phase?

Crucially, this isn't about some personal vendetta or frustration from lost crypto keys—the original context explicitly states his opinion has "nothing to do with the crypto he lost the password for." His critique is purely technical, utility-focused, and rooted in a deep understanding of internet-scale problem-solving.

Luis von Ahn's position isn't necessarily a death knell for blockchain. But it is a powerful signal from a highly respected, results-driven voice in tech, and it reflects growing impatience among seasoned tech leaders for demonstrable substance over speculative hype in emerging technologies.

As professionals, we should heed such critiques. They compel us to demand more, to innovate more responsibly, and to focus our energies on solutions that genuinely improve the world, rather than just create new markets for speculation. Perhaps von Ahn's bold declaration will inspire more rigorous critical examination and a renewed focus on building truly impactful, practical applications for any technology, blockchain included. The future of innovation hinges on this discernment.